The Reference Interview You're Not Having with ChatGPT

How to figure out what you actually need before asking AI for anything.

Last week, my colleague asked ChatGPT to “create a performance review template.” Twenty minutes later, she was practically crying on our Zoom call. “It gave me this generic corporate nightmare,” she said, sharing her screen. “Like something from a company that makes employees rank each other Hunger Games-style.”

I asked her three questions: Who’s this for? What specific behaviors are you trying to assess? What happened with your last template that made you want a new one?

Turns out, she needed a review format for creative freelancers that focused on project outcomes rather than hours logged. She wanted something that captured artistic growth without sounding patronizing. The old template was designed for full-time employees and kept asking about “punctuality” and “dress code adherence” for people who work from home in their pajamas.

We rewrote her prompt. ChatGPT delivered exactly what she needed. The difference? We did a reference interview first.

The Questions You’re Not Asking Yourself

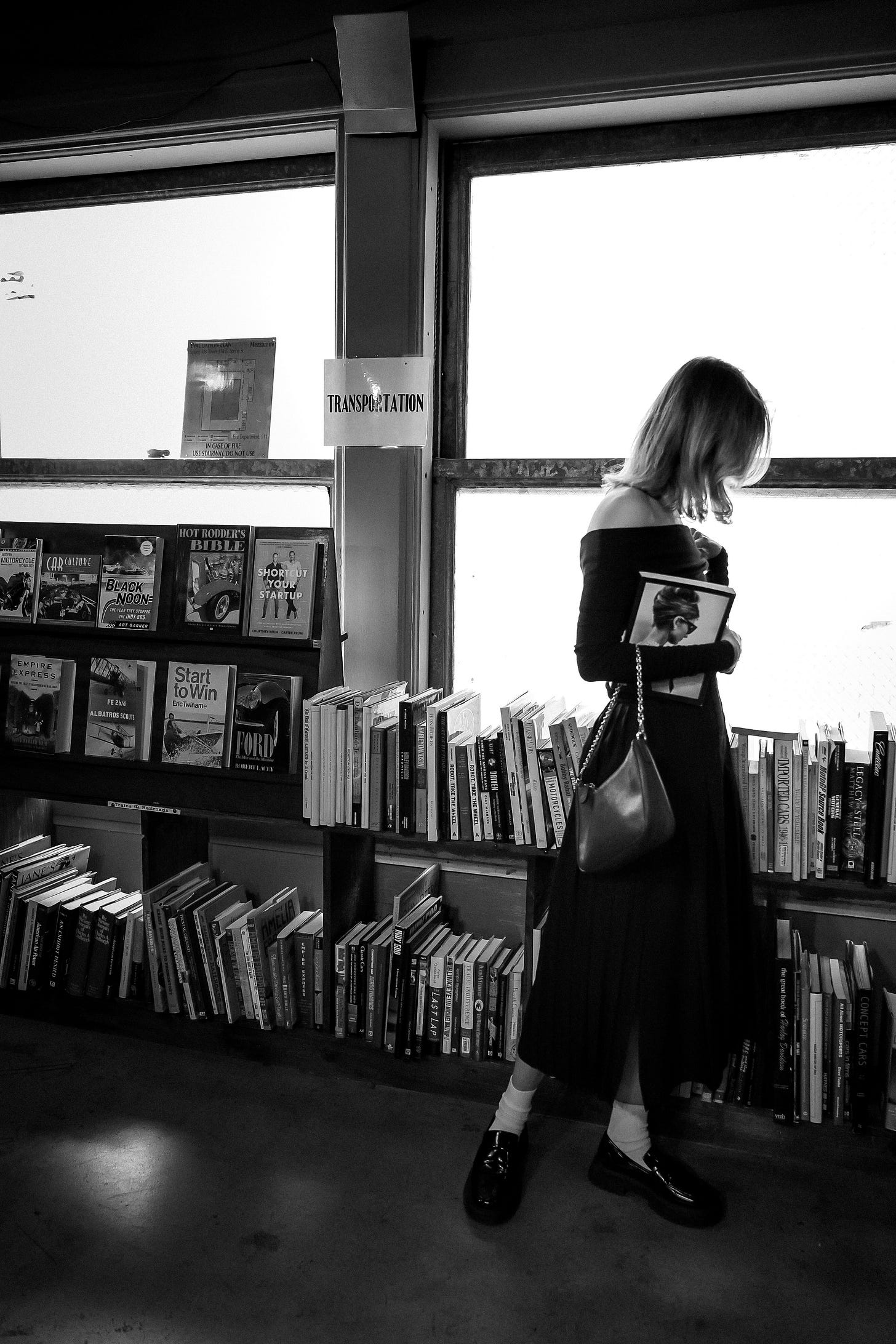

Remember when I mentioned that librarians never take the first question at face value? There’s actually a formal process for this: the reference interview. It’s a structured conversation designed to uncover what someone really needs versus what they think they want. And here’s the kicker: you can run one on yourself before you ever open ChatGPT.

📖 Librarian Dictionary:

reference interview (n.)

/ˈref(ə)rəns ˈintərvjuː/ Library Science. The structured conversation between a librarian and a patron in which the librarian plays detective to figure out what the person actually needs, because what they ask for is almost never what they want. Classic example: A patron asks for “books about dogs.” The librarian discovers they need three peer-reviewed sources on canine behavior modification for aggressive rescue dogs, published within the last five years, in APA format, due tomorrow.

Origin: Formalized in library science programs circa the 1970s, though librarians have been doing this since Alexandria. Now applicable to any situation where someone’s first question is definitely not their real question.

See also: what your therapist does, what you should do before prompting ChatGPT, why “I’m fine” means anything but.

The classic reference interview follows a pattern that feels almost therapeutic. (My therapist friends find this hilarious.) You start with the presenting question, then dig into context, constraints, and ultimate purpose. It’s like being your own detective, except instead of solving crimes, you’re solving your own poorly articulated needs.

Here’s what actually happens when I watch people prompt AI. They type something like “meal plan for the week” and get back a meal plan that assumes they have two hours every evening, access to specialty ingredients, and the knife skills of a sushi chef. Then they blame the AI.

But watch what happens when you interview yourself first:

What meals am I actually capable of making?

How much time do I really have? (Not aspirational time. Real time.)

What’s already in my pantry that needs using?

What happened to my last meal plan? Why did it fail?

Who else am I feeding and what won’t they eat?

Suddenly “meal plan for the week” becomes “5 dinners, 30 minutes max, one-pot meals, using chicken thighs and whatever vegetables survive in my crisper drawer, for two adults who hate cilantro, with leftovers for lunch.”

The CLEAR Framework (Or How Librarians Have Been Prompt Engineering Since Forever)

Dr. Leo S. Lo, Dean of Libraries and Advisor for AI Literacy at the University of Virginia, formalized what librarians have been doing intuitively. CLEAR stands for Concise, Logical, Explicit, Adaptive, and Reflective, and it isn’t just another acronym. It’s literally how we’ve taught database searching for decades, just translated for AI.

Concise doesn’t mean short. It means no unnecessary words clouding your actual need. Compare “I need help with my dissertation” with “I need to identify peer-reviewed studies from 2020–2024 on remote work’s impact on junior developer productivity, excluding studies focused solely on pandemic response.”

Logical means structuring your request the way you’d structure an argument. Context first, then specific need, then constraints, then desired format. Not a stream-of-consciousness dump of everything in your brain.

Explicit is where people fumble most. You want a blog post? Say how long. What tone. What reading level. Whether you want citations. ChatGPT can’t read your mind, despite what Silicon Valley wants you to believe.

Adaptive acknowledges that your first prompt will probably suck. That’s not failure. That’s iteration. Librarians don’t nail the perfect database search on the first try. We refine, narrow, expand, pivot. Same with AI.

Reflective might be the most important element and the most ignored. Actually read what the AI gave you. Think about why it’s wrong. Because something will be wrong. Is it wrong because your prompt was vague? Because the AI lacks context? Because you asked for something inherently contradictory? The failure points teach you more than the successes.

Real Prompts That Failed (And Why)

Let me show you actual prompts from my work Slack, stripped of identifying details but with all their glorious failure intact:

“Write a creative brief”

What the AI heard: Generate generic marketing template

What they needed: A brief for a specific campaign targeting Gen Z runners for a sustainable shoe brand launching in spring

“Help me with employee onboarding”

What the AI heard: Create a comprehensive onboarding checklist

What they needed: Three email templates for remote creative contractors’ first week, focusing on tool access and project management systems

“Summarize this meeting”

What the AI heard: Create bullet points of everything discussed

What they needed: Extract three action items with owners and deadlines, plus any budget implications

See the pattern? Every failed prompt skipped the reference interview. They went straight from vague need to AI query without the crucial middle step of figuring out what success actually looked like.

The Practice Session Nobody Tells You About

Before librarians are allowed anywhere near actual patrons, we practice reference interviews on each other. We roleplay increasingly ridiculous scenarios. (I once had to help a fake patron find “that blue book about the thing with the guy.”)

You need the same practice with AI prompting. Start with something low-stakes. Maybe a thank-you note or a recipe modification. Interview yourself:

Who’s the recipient and what’s our relationship?

What specific thing am I thanking them for?

What tone reflects our dynamic?

What outcome do I want from this note?

Then write your prompt. Then revise it based on what you get back. Then notice what you’re learning about how to communicate with this particular kind of intelligence.

The goal isn’t perfect prompts. It’s developing an intuition for what information the AI needs to help you effectively. It’s learning to recognize your own unstated assumptions and make them explicit.

What We’re Really Talking About Here

This isn’t actually about AI at all. (Plot twist.) It’s about clarifying our own thinking. The reference interview forces us to articulate what we really want versus what we reflexively ask for. AI just happens to be mercilessly literal about giving us exactly what we request, making our fuzzy thinking impossible to ignore.

Every time ChatGPT gives you garbage, it’s holding up a mirror to the clarity of your request. That’s uncomfortable. It’s also incredibly useful.

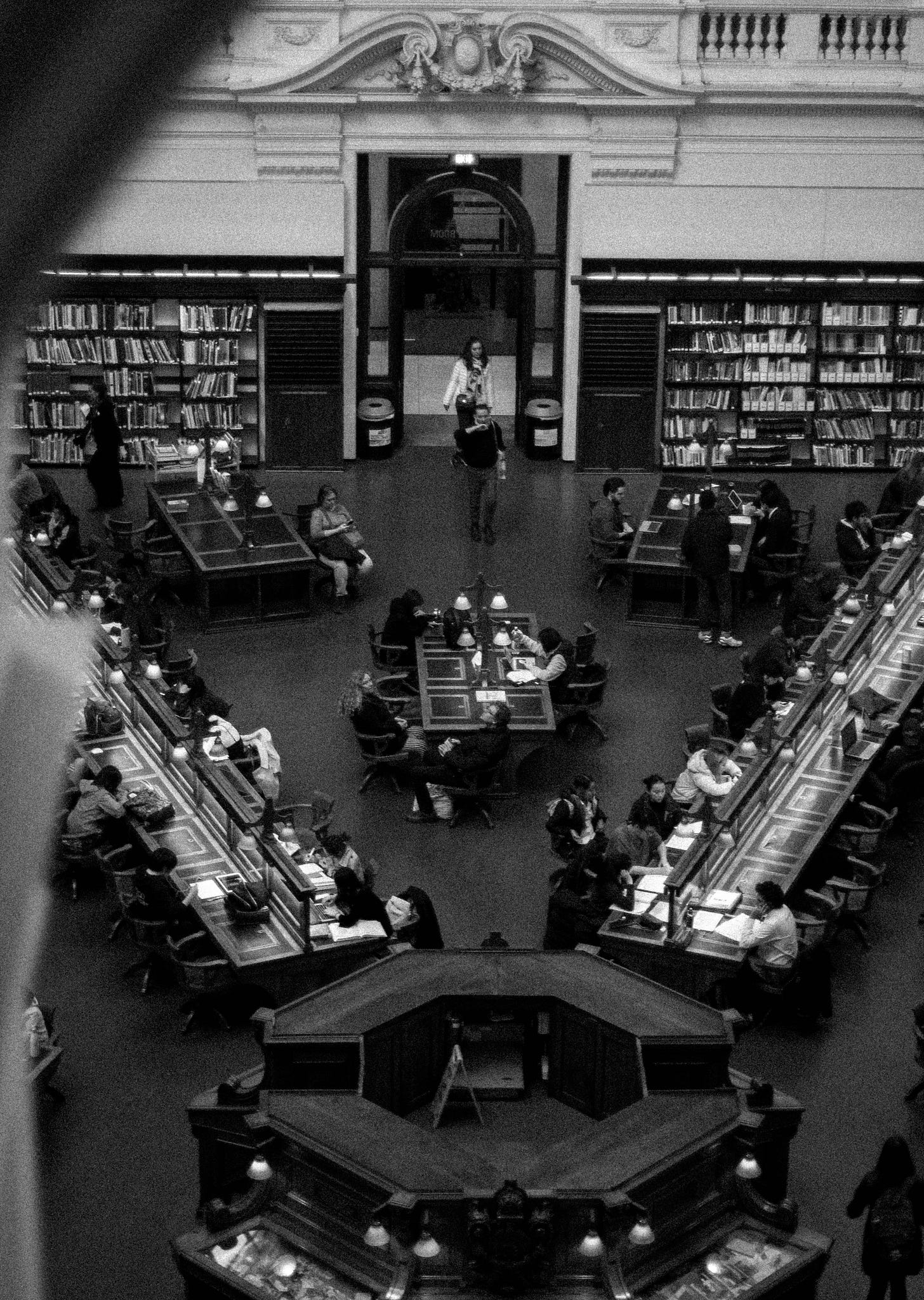

When librarians taught people to search databases, we weren’t really teaching them about Boolean operators and truncation symbols. We were teaching them to think systematically about information needs. The database was just the vehicle. Now AI is the vehicle, but the skill remains the same: knowing what you actually need before you start looking for it.

Next week, we’re going deep on source evaluation in the age of infinite content generation. I’ll show you how librarians spot fake citations, fabricated quotes, and hallucinated facts (and how you can too). Plus, why AI’s total confidence in completely made-up information might actually be a feature, not a bug, if you know how to use it.

Subscribe if you want to learn the verification tricks librarians use that would make fact-checkers weep with joy. Because if AI is going to make things up, we might as well get good at catching it in the act.